Solution

Runpod makes GPU infrastructure simple.

Runpod is the end-to-end AI cloud that simplifies building and deploying models.

Go from idea to deployment in a single flow.

Runpod simplifies every step of your workflow—so you can build, scale, and optimize without ever managing infrastructure.

Enterprise-grade uptime.

Runpod handles failovers, ensuring your workloads run smoothly—even when resources don’t.

Managed orchestration.

Runpod Serverless queues and distributes tasks seamlessly, saving you from building orchestration systems.

Real-time logs.

Get real-time logs, monitoring, and metrics—no custom frameworks required.

Features

Scale with Serverless when you're ready for production.

Powerful compute, effortless deployment.

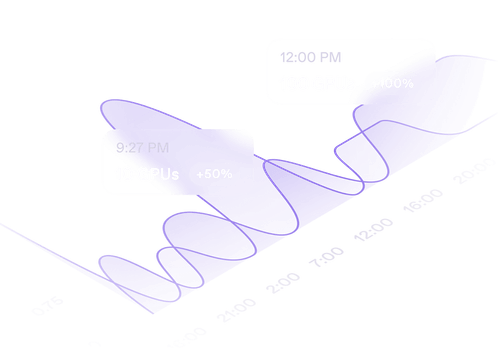

Autoscale in seconds

Instantly respond to demand with GPU workers that scale from 0 to 1000s in seconds.

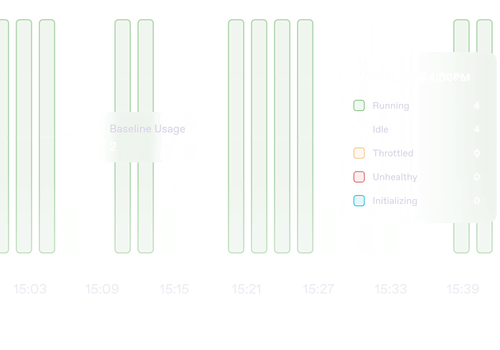

Zero cold-starts with active workers

Always-on GPUs for uninterrupted execution.

<200ms cold-start with FlashBoot

Lightning-fast scaling with sub-200ms cold-starts.

Persistent network storage

Run full AI pipelines, from data ingestion to deployment, without egress fees on our S3-compatible storage.

Case Studies

Loved by developers.

But don’t just take it from us.
